- Pharmaceutical Technology-03-02-2016

- Volume 40

- Issue 3

Statistical Tools to Aid in the Assessment of Critical Process Parameters

There are many different approaches for assessing process parameter criticality, and assessing which process parameters have a significant impact on critical quality attributes (CQAs) is a particular challenge. Including an unimportant process parameter as a critical process parameter (CPP) in a control strategy can be detrimental. The authors present a statistical approach to determine when a statistically significant relationship between a process parameter and a CQA is large enough to make a practically meaningful impact (i.e., practical significance).

The assessment of critical quality attributes (CQAs) and the control of critical process parameters (CPPs) that affect these attributes are important components of the overall control strategy for drug substance and drug product manufacturing. There are many different approaches for assessing process parameter criticality, and statistics can play an important role in these evaluations. One particular challenge involves assessing when a relationship between a process parameter and a CQA represents a significant impact on that CQA. Assessing impact based solely on statistical significance (p-value) is not appropriate, because statistical significance does not take into account the strength of the relationship relative to the relevant quality requirements and can lead to the inclusion of relatively unimportant process parameters as critical elements of the control strategy. Including these unimportant process parameters as CPPs is undesirable as it effectively dilutes the focus on process parameters that are truly important for ensuring product quality. The excessive assignment of criticality to unimportant process parameters can also place an unnecessary burden on manufacturing operations.

This article introduces a statistical approach to help determine when a statistically significant relationship between a process parameter and a CQA is large enough to make a practically meaningful impact (i.e., practical significance). The assessment of practical significance can then be used to determine if a parameter has a significant impact on a CQA, thus helping to assess the criticality of process parameters. The described statistical methodology is intended to provide a consistent framework for discussions on process parameter criticality and should be used to support but not replace scientific judgment.

The statistical approach that has been developed takes into account the process risk (Z score) and the parameter effect size (20% rule). This article discusses some background on criticality assessments, the motivation behind the development of a new strategy for these assessments, and examples of the implementation of this approach.

Background on criticality assessments

The control of CQAs, critical material attributes (CMAs), and CPPs is an integral component of the overall control strategy for drug substance and drug product manufacturing. The International Conference on Harmonization (ICH) Q8(R2) (1) provides the following definitions:

- CQA is defined as a physical, chemical, biological, or microbiological property or characteristic that should be within an appropriate limit, range, or distribution to ensure the desired product quality.

- CPP is a process parameter whose variability has an impact on a critical quality attribute and therefore should be monitored or controlled to ensure the process produces the desired quality.

ICH Q11 (2) states that: “A control strategy should ensure that each drug substance CQA is within the appropriate range, limit, or distribution to assure drug substance quality. The drug substance specification is one part of a total control strategy and not all CQAs need to be included in the drug substance specification. CQAs can be (i) included on the specification and confirmed through testing the final drug substance, or (ii) included on the specification and confirmed through upstream controls (e.g., as in real-time release testing [RTRT]), or (iii) not included on the specification but ensured through upstream controls.”

In addition, ICH Q11 states that: “Impurities are an important class of potential drug substance CQAs because of their potential impact on drug product safety. For chemical entities, impurities can include organic impurities (including potentially mutagenic impurities), inorganic impurities (e.g., metal residues), and residual solvents.”

The term CMA is not defined in the glossary of ICH Q8, but ICH Q11 clarifies that material attributes can be intermediate, reagent, solvent, or starting material attributes. It is appropriate to use the term CMA for any of the starting material or intermediate attributes that have an impact on a drug substance CQA (particularly in the case where a specific CQA/impurity in the drug substance is derived from a different chemical species upstream).

Based on the above definitions and ICH Q8/Q11 guidance, the criticality of process parameters should be assessed for their impact on the CQAs and CMAs. Upstream/intermediate specifications can include quality attributes that are not part of the control strategy for CQAs but are included for monitoring and trending purposes only.

Assessment of process parameter criticality

Process parameter criticality can be evaluated using risk assessments, experimental investigation, or a combination of these two approaches. The determination that parameters are critical or non-critical by risk assessment is relatively straightforward: if established science, knowledge, or data clearly indicates that a parameter will or will not have a significant impact on a CQA, criticality can be assigned. For many parameters, however, the risk assessment will indicate that experimental investigation is required to assess criticality (3).

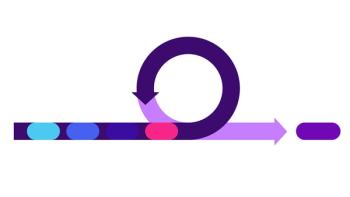

In cases where data are available from multivariate and/or univariate design of experiments (DoEs), these data can be used to help assess process parameter criticality (see Figure 1). The first question to consider is whether a parameter has a statistically significant relationship to a CQA. If so, the practical significance of this relationship should be assessed. If the parameter is considered to have a practically significant relationship to a CQA, then the parameter should be considered critical. If the parameter is determined to not have a statistically significant relationship to a CQA, or it is determined to have a statistically significant, but not a practically significant relationship to a CQA, the team should still review the control strategy holistically, prior to assessing the parameter as non-critical. In this review of the control strategy, the team should evaluate the established science related to this process parameter and consider whether promotion of the parameter to critical would be beneficial to the overall control strategy. Promotion of a parameter from non-critical to critical during this final review process would not be expected to be a common occurrence.
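The decision flow described above can be sketched in a few lines of Python. This is only an illustrative reading of the text: the function name, argument names, and returned labels are hypothetical, and the holistic review is reduced to a single flag supplied by the team rather than anything computed.

```python
def assess_criticality(statistically_significant: bool,
                       practically_significant: bool,
                       promoted_on_holistic_review: bool = False) -> str:
    """Return 'critical' or 'non-critical' for one process parameter.

    Hypothetical helper mirroring the flow described in the text:
    statistical significance is checked first, then practical significance,
    and anything not flagged still goes through a holistic review.
    """
    if statistically_significant and practically_significant:
        return "critical"
    # Not statistically significant, or statistically but not practically
    # significant: the team reviews the control strategy before concluding.
    if promoted_on_holistic_review:
        return "critical"
    return "non-critical"


print(assess_criticality(True, True))
print(assess_criticality(True, False))
print(assess_criticality(False, False, promoted_on_holistic_review=True))
```

Keeping the review outcome as an explicit input reflects the point that promotion to critical is a scientific judgment, not a statistical result.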

Statistical approach for practical significance

Using statistical significance as the definition of practical significance is not appropriate, because statistical significance does not take into account the strength of the relationship relative to the relevant quality requirements. An appropriate statistical evaluation of practical significance can take into account the risk of failing a limit/specification and the parameter effect size. For example, the green and blue lines in Figure 2 represent two statistically significant relationships between a process parameter and a response. The acceptable limit for this response is indicated by the red dashed line. The relationship represented by the blue line is practically significant, but the relationship represented by the green line is not, because the effect size is small and all of the results are far below the limit. Therefore, the severity resulting from a parameter effect needs to be considered for CPP assessment just as it is contemplated in evaluating quality attribute criticality (3).

A statistical approach has been developed to help determine when a statistically significant relationship between a process parameter and a CQA can be defined as a practically significant relationship. This approach uses two statistical tools (Z score and 20% rule). The concept behind the first tool, the Z score, is shown in Figure 3. Each plot represents the data points from a 20-run multivariate DoE (five parameters included in the study) with an impurity (Area %) on the y-axis and the experiment number on the x-axis. Variability present in the data could come from different sources, including intentional changes in parameters, analytical variability, and the natural variability (i.e., random noise) inherent in the process. If there is too much variability in the data that is not explained by the intentional variation of parameters, the variability should be addressed before proceeding with this analysis.

In Figure 3, the limit for this impurity is indicated by the red dashed line. The data points in (a) are tight and far away from the limit, suggesting a low-risk process. Moving any parameter over its experimental range is not going to significantly elevate the risk of failing versus this limit; it is reasonable to conclude that no parameter is practically significant. By contrast, data points in (c) are very close to the limit; therefore, even though the relative variability in the data is the same as in (a), the process is at higher risk. In this case, any statistically significant parameter should be considered practically significant. Data points in (b) are neither far from nor close to the limit. In these cases, the second statistical tool (20% rule) is needed to quantify the individual parameter effect size. This effect size, calculated using the statistical model, can be used to assess practical significance.

As introduced conceptually in Figure 3, the distance between the data and the limit plays an important role in process risk evaluation, and the Z score can be used to measure this distance. If x̄ and s are the average and standard deviation of the data, respectively, and U is the upper limit of the specification, the Z score can be calculated using Equation 1:

Z = (U - x̄)/s [Eq. 1]
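As a minimal sketch, Equation 1 can be computed directly from the DoE responses. The function name and the data below are hypothetical, and the use of the sample standard deviation (n - 1 denominator) is an assumption, since the article does not state which estimator is intended.

```python
from statistics import mean, stdev


def z_score_upper(responses: list[float], upper_limit: float) -> float:
    """Z = (U - mean) / s for a one-sided, upper-limit specification.

    Assumption: s is the sample standard deviation (n - 1 denominator).
    """
    s = stdev(responses)
    if s == 0:
        raise ValueError("standard deviation is zero; Z score is undefined")
    return (upper_limit - mean(responses)) / s


# Hypothetical impurity results (area %) against a hypothetical 1.0% limit.
impurity = [0.28, 0.31, 0.35, 0.30, 0.33, 0.29]
print(f"Z = {z_score_upper(impurity, upper_limit=1.0):.1f}")
```

Because the calculation pools every run, it describes how close the whole dataset sits to the limit rather than the effect of any single parameter.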

The Z score evaluates how close the entire dataset is to the limit, without focusing on any individual parameter effects. The value for Z effectively indicates how far the data are from the limit/specification; a large Z score indicates that the data are far from the limit/specification, while a small Z score indicates that the data are close to the limit/specification. Conceptually, the Z score is similar to a process capability index (Cpk) (4); a large Z score indicates a low-risk process and a small Z score indicates a high-risk process. In this approach, Z score values of two and six are used as the cut-off values for assessing practical significance. In cases where a Z score is larger than six, as illustrated conceptually in Figure 3(a), there are no practically significant parameters. In cases where a Z score is less than two, as illustrated conceptually in Figure 3(c), it is generally appropriate to conclude that every statistically significant parameter is practically significant. If a Z score is between two and six, as illustrated conceptually in Figure 3(b), the individual effect size of statistically significant parameters needs to be quantified and compared to the limit/specification. Here, 20% of the limit/specification range (20% rule) is chosen as the threshold for practical significance. If a parameter effect size is greater than 20% of the limit/specification range, it should be considered practically significant. If the parameter effect size is less than 20% of the limit/specification range, then it is not practically significant. The selection of 20% as the threshold for practical significance is similar to the conventional criteria (0.2-0.3) for a small effect size, using Cohen's d (5). This procedure for assessing practical significance is outlined in Figure 4. The described approach allows for the rapid and consistent assessment of process parameter criticality. For simplicity, the above discussion of Z scores is focused on one-sided, upper-limit specifications (U). It is worth noting that the approach can be easily extended to one-sided, lower-limit specifications and also to two-sided specifications.
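The procedure above can be sketched as a small decision rule for a parameter that has already been found statistically significant. The function and constant names are hypothetical. Two points are assumptions rather than statements from the article: how values falling exactly on a cut-off (Z of two or six, or an effect of exactly 20%) are handled, and the lower-limit form Z = (mean - L)/s, which is taken here as the mirror image of Equation 1. The specification range is left as an input because the text does not define it for every type of limit.

```python
from typing import Optional

Z_LOW, Z_HIGH = 2.0, 6.0   # cut-off values stated in the text
EFFECT_FRACTION = 0.20     # the "20% rule"


def z_score(avg: float, sd: float,
            lower: Optional[float] = None,
            upper: Optional[float] = None) -> float:
    """Smallest Z score over whichever specification limits are supplied."""
    if sd <= 0:
        raise ValueError("standard deviation must be positive")
    scores = []
    if upper is not None:
        scores.append((upper - avg) / sd)
    if lower is not None:
        scores.append((avg - lower) / sd)  # assumed mirror of Equation 1
    if not scores:
        raise ValueError("at least one limit is required")
    return min(scores)


def is_practically_significant(z: float, effect_size: float,
                               spec_range: float) -> bool:
    """Apply the Z score cut-offs, then the 20% rule for intermediate Z."""
    if z > Z_HIGH:
        return False   # low-risk process: no practically significant parameters
    if z < Z_LOW:
        return True    # high-risk process: every significant parameter counts
    # Assumption: an effect exactly at the threshold is treated as not significant.
    return abs(effect_size) > EFFECT_FRACTION * spec_range
```

Taking the minimum of the two Z scores means a two-sided specification is judged by whichever limit the data sit closer to.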

Examples

Two examples are included to illustrate the implementation of the described approach. The first example shows the criticality assessment for six crystallization process parameters in a drug substance intermediate step. The studied parameters were seed temperature, temperature ramp rate 1, temperature ramp 1 end temperature, temperature ramp rate 2, temperature ramp 2 end temperature, and seed loading. These parameters were incorporated into a 2^(6-2) fractional factorial (16 runs) with four replicates at the target conditions, for a total of 20 experiments. Shown in Figure 5 are the bar charts for one process-related impurity (impurity 1) with a limit of not more than 1.0% at the intermediate step. This impurity is a CMA, as it tracks forward to an impurity (CQA) in the drug substance. The limit was set based on downstream fate and purge data. Statistical analysis revealed that ramp rate 1 and seed loading had statistically significant relationships with impurity 1. Given the calculated average (0.31%), the standard deviation (0.037%), and the limit of 1.0%, a Z score of 18 is obtained. The Z score of 18 is greater than six, and therefore none of the studied parameters have a practically significant relationship with impurity 1.
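The arithmetic for this first example can be checked from the summary statistics quoted above. The variable names are illustrative. With the rounded average and standard deviation, the result comes out at roughly 18.6, which is in line with the reported value of 18 (presumably obtained from unrounded data) and leads to the same conclusion.

```python
# Summary statistics quoted in the text for impurity 1 (all in area %).
average = 0.31
std_dev = 0.037
upper_limit = 1.0

z = (upper_limit - average) / std_dev   # Equation 1
low_risk = z > 6.0                      # cut-off for "no practically significant parameters"

print(f"Z = {z:.1f}; greater than six: {low_risk}")
```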

A second example is shown in Figure 6. This example is based on a measurement of tablet assay, in a 17-run experimental study for a drug product process, with a two-sided specification of 95%-105%. The study was designed to explore the impact of excipients (magnesium stearate quantity, dicalcium phosphate [DCP] source) and process parameters (Comil shear force, pre-blending) on tablet assay. Statistical analysis revealed a significant interaction between DCP source and Comil shear force. The fitted regression model is shown in Equation 2 as follows:

For a two-sided specification, two Z scores are calculated, and the lower of the two values is used to assess practical significance. Given the calculated average (99.24%) and standard deviation (1.64%) for tablet assay, the upper limit and lower limit had Z scores of 3.5 and 2.6, respectively. Both Z scores fall between two and six, so additional assessment via the 20% rule is required to quantify individual parameter effect sizes. For a model with a significant interaction between two parameters, each calculated parameter effect needs to take into account both the main effect and the interaction effect. The statistical model in Equation 2 predicts that the maximum change in assay is 3.36% for Comil shear force and 3.20% for DCP source. Given the specification range of 10% (105%-95%) for tablet assay, the 20% threshold is 2.0%. As both effect sizes are greater than 2.0%, Comil shear force and DCP source are practically significant.
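The numbers in this second example can be reproduced with a short calculation. This is a sketch using only the values quoted in the text; the variable names are illustrative, and the effect sizes are taken as given because the fitted model itself is not reproduced here.

```python
# Tablet assay summary statistics and specification quoted in the text (%).
average, std_dev = 99.24, 1.64
lower_limit, upper_limit = 95.0, 105.0

z_upper = (upper_limit - average) / std_dev
z_lower = (average - lower_limit) / std_dev
z = min(z_upper, z_lower)                        # the lower Z score governs

threshold = 0.20 * (upper_limit - lower_limit)   # 20% of the specification range

# Maximum predicted changes in assay, as reported for the fitted model.
effects = {"Comil shear force": 3.36, "DCP source": 3.20}

needs_20_percent_rule = 2.0 <= z <= 6.0
practically_significant = {
    name: needs_20_percent_rule and effect > threshold
    for name, effect in effects.items()
}

print(f"Z upper = {z_upper:.1f}, Z lower = {z_lower:.1f}, threshold = {threshold:.1f}%")
print(practically_significant)
```

Both effects exceed the 2.0% threshold, matching the conclusion that the two parameters are practically significant.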

Summary

In general, it is expected that the evaluation of practical significance presented in the two examples discussed would encompass all relevant experiments that assess a given parameter (i.e., the entire explored range). Given the expectation that criticality assessments should be performed by evaluating effects across the entire explored range, boundaries of the explored ranges should be set in a pragmatic fashion. These explored ranges need to effectively support the development of process understanding and the need for usable ranges in manufacturing, while avoiding unnecessary expansion of the investigation into ranges that would never be considered for manufacturing.

Conclusion

Process parameter criticality can be determined using risk assessments, experimental investigation, established science, or a combination of these approaches. The proposed statistical methodology is intended to provide guidance and a common language to facilitate discussions on process parameter criticality. Criticality is assessed by determining when a statistically significant relationship should be considered a practically significant relationship, which is then used to aid the overall criticality assessment.

Acknowledgements

The authors would like to thank Brad Evans, Greg Steeno, Leslie Van Alstine, Brian P. Chekal, Mark T. Maloney, Shengquan Duan, Steven Guinness, and Ken Ryan for their helpful discussion and feedback during the development of this approach.

References

1. ICH, Q8(R2) Pharmaceutical Development (2009).

2. ICH, Q11 Development and Manufacture of Drug Substances (2012).

3. L.X. Yu et al., The AAPS Journal, 16 (4) 771-783 (2014).

4. D. Montgomery, “Process Capability Analysis”, in Introduction to Statistical Quality Control (John Wiley & Sons, Inc., New York, 3rd ed., 1997), pp. 430-470.

5. J. Cohen, “Analysis of Variance”, in Statistical Power Analysis for the Behavioral Sciences (Lawrence Erlbaum Associates, 2nd ed., 1988), pp. 273-406.

Article Details

Pharmaceutical Technology

Vol. 40, No. 3

Pages: 36–44

Citation:

When referring to this article, please cite it as K. Wang et al., “Statistical Tools to Aid in the Assessment of Critical Process Parameters,” Pharmaceutical Technology 40 (3) 2016.
