- Pharmaceutical Technology-10-02-2014

- Volume 38

- Issue 10

The Role of Normal Data Distribution in Pharmaceutical Development and Manufacturing

A review of the challenges in normality testing, with recommendations on how to assess data distributions in the pharmaceutical development and manufacturing environment.

There are fundamental reasons why the normal distribution is a reasonable approximation in many development and manufacturing settings, but there are also practical reasons why data may not exactly follow a normal distribution. This article provides an overview of both cases. It will focus on content uniformity and provide examples of why this type of data is not necessarily normally distributed. It will also provide insight into why it is easy, even when underlying data are normal, to reject normality via standard statistical tests. The goal of this article is to increase awareness of the concept of normality within the pharmaceutical industry and detail its potential limitations.

Why assume normality?

Statisticians frequently assume that data follow a normal distribution when developing statistical methods and performing practical data analysis. In most introductory statistics textbooks, the methods presented assume the data follow a normal distribution. Why is this distribution so heavily used?

First, an immense amount of data from many differing fields and applications displays the approximately bell-shaped, single-peak distribution. In these cases, the data seem to center at a particular value (mean) and spread out relatively evenly on both sides of the center with most data congregating near the central value.

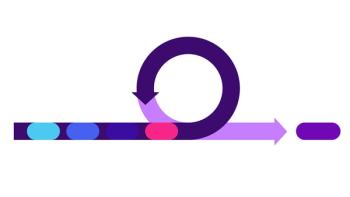

The second reason that the normal distribution is used is that most statistical methods employ some level of summing or averaging of the individual data points. A useful theorem in statistics, the Central Limit Theorem, states that sums and averages tend toward a normal distribution as more values are combined, almost regardless of the distribution the individual data values come from. For a real-life example, consider rolling a six-sided die a given number of times and taking the average of the results. Assume that a die is rolled five times, and the average of the five results (“rolls”) is collected. For instance, suppose roll 1 produces a 6, roll 2 a 4, roll 3 a 4, roll 4 a 2, and roll 5 a 3. The sum of these numbers is 19 and the average is 3.8. This process is then repeated a number of times, say 10, 50, or 500 times. Although it is equally likely to receive a 1, 2, 3, 4, 5, or 6 from any individual roll of the die, and thus the distribution of any roll is flat or uniform (i.e., not “normal”), the distribution of the five-roll average appears to be unimodal, symmetric, and bell-shaped, and this “normal” shape becomes clearer as the process is repeated a large number of times. Figure 1 shows the distribution of each roll, with the resulting distribution of the average when the process is repeated 10, 50, and 500 times. Note that the uniform nature of the distribution of individual data and the “normal” appearance of the distribution of the averages hold and are easier to visualize as the sample size increases (1).

This theorem is the main reason that statistical methods that are based on assuming a normal distribution are quite robust to departures from this assumption. In other words, even if the underlying data do not exactly follow a normal distribution, the statistical methods work reasonably well on the sums/averages that are often being analyzed statistically. For a more detailed explanation of the graphs used in Figure 1, see the next section.
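
The dice experiment can be reproduced with a short simulation. The sketch below is illustrative only: the function name `five_roll_averages` and the fixed seed are assumptions made here, not part of the original analysis, and the printed summaries depend on the random draws.

```python
import random
import statistics

def five_roll_averages(repeats, rolls=5, seed=0):
    """Return `repeats` averages, each taken over `rolls` throws of a fair six-sided die."""
    rng = random.Random(seed)
    return [
        statistics.fmean([rng.randint(1, 6) for _ in range(rolls)])
        for _ in range(repeats)
    ]

# The worked example from the text: rolls of 6, 4, 4, 2, and 3.
example = [6, 4, 4, 2, 3]
print(sum(example), statistics.fmean(example))  # 19 3.8

# Repeat the five-roll experiment 10, 50, and 500 times, as in Figure 1.
for repeats in (10, 50, 500):
    averages = five_roll_averages(repeats)
    print(repeats, round(statistics.fmean(averages), 2), round(statistics.stdev(averages), 2))
```

Each individual roll is uniform on 1 to 6, yet the averages cluster around 3.5; a histogram of `averages` for the larger runs should look roughly bell-shaped.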

The final reason assuming normality is so common is that this distribution has practical properties that allow for useful interpretation of data. For example, if data are approximately normal there is a reasonable expectation of where future data should fall relative to the mean of the current data. A common tool in practical data analysis is to calculate “three sigma limits” to understand where to expect the future data to range. Almost all future data will lie within the three sigma limits of the mean since 99.7% of data from a normal distribution should be within these limits.
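
The 99.7% figure can be checked directly from the normal distribution, and three sigma limits are simple to compute from a sample. In the sketch below, the helper names and the `results` values are hypothetical and serve only as an illustration.

```python
import statistics
from statistics import NormalDist

def coverage_within(k):
    """Fraction of a normal distribution lying within k standard deviations of the mean."""
    standard_normal = NormalDist(mu=0.0, sigma=1.0)
    return standard_normal.cdf(k) - standard_normal.cdf(-k)

def three_sigma_limits(data):
    """Sample mean minus and plus three sample standard deviations."""
    mean = statistics.fmean(data)
    sd = statistics.stdev(data)
    return mean - 3 * sd, mean + 3 * sd

for k in (1, 2, 3):
    print(k, round(100 * coverage_within(k), 2))  # 68.27, 95.45, 99.73

# Hypothetical content uniformity results (% label claim), for illustration only.
results = [99.1, 100.4, 98.7, 101.2, 100.0, 99.6, 100.9, 99.8, 100.3, 99.5]
low, high = three_sigma_limits(results)
print(round(low, 2), round(high, 2))
```

The limits are only as trustworthy as the normal approximation and the estimates of the mean and standard deviation, which are themselves uncertain for small samples.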

What are the methods to determine normality?

Graphical tools are one of the best ways to determine if data follow an approximate normal distribution (2, 3). They also have the additional benefit of highlighting other possible features in a set of data such as outliers. As John W. Tukey (4) said, “The greatest value of a picture is when it forces us to notice what we never expected to see.” The histogram, boxplot, and normal probability plot are three graphics that can be used when assessing the distribution of a set of data.

- Figure 2, left panel, shows a histogram of some typical content uniformity data. In this graph, the length of the bar shows the number of data values (x-axis) that fall in the range of the bar indicated on the y-axis. From this graph, the distribution of data looks relatively normal with perhaps a longer “tail” on the lower side of the mean than on the upper side.

- Figure 2, middle panel, is a boxplot of the same content uniformity data shown on the left. The data set is represented by a box and lines that extend past the top and bottom of the box. The line across the middle of the box is the median (middle value) of the data set. The top of the box is the 75th percentile (75% of the data are below the top of the box) and the bottom of the box is the 25th percentile. The box encompasses the middle 50% of the data. If the data follow an approximately normal distribution, the median should be near the center of the box and the lines extending out from the box should be roughly the same length. The difference between the 75th and 25th percentiles is called the interquartile range. The lines coming out of the box at the top and bottom extend to the largest and smallest data values that are within 1.5 times the interquartile range from the box. If there are values beyond that limit, they are plotted as individual values (stars in Figure 2). Note that two values are plotted as stars on the bottom indicating that these values are worth investigating to make sure they are truly a part of the data distribution.

- The final graphical tool for assessing the distribution of a set of data is the normal probability plot (i.e., normal quantile plot). Figure 2, right panel, shows the content uniformity data in a normal probability plot. The x-axis scale represents the percentiles of a normal distribution. When data are plotted against this scale, the points should fall along a straight line if the data are normally distributed. It is not essential that the data form a perfectly straight line, only that they be close. One practical approach is to imagine that the graph is on a piece of paper and that a fat pencil is placed on the graph. This fat pencil should be able to cover most of the points if the data are reasonably approximated by a normal distribution.
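
The quantities behind the boxplot and the normal probability plot can be computed without any plotting library. The following is a minimal sketch under stated assumptions: the data values are hypothetical, the quartiles use Python's "inclusive" interpolation (statistical packages differ in their quartile conventions), and the plotting position (i - 0.5)/n is one common choice among several.

```python
import statistics
from statistics import NormalDist

def boxplot_summary(data):
    """Quartiles, whisker ends, and flagged points using the 1.5 x IQR rule."""
    q1, median, q3 = statistics.quantiles(data, n=4, method="inclusive")
    iqr = q3 - q1
    low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    inside = [x for x in data if low_fence <= x <= high_fence]
    flagged = sorted(x for x in data if x < low_fence or x > high_fence)
    return {
        "q1": q1,
        "median": median,
        "q3": q3,
        "whiskers": (min(inside), max(inside)),  # most extreme values inside the fences
        "flagged": flagged,                      # plotted individually (the "stars")
    }

def normal_plot_points(data):
    """Pair each sorted value with the standard normal quantile of its plotting position."""
    n = len(data)
    std_normal = NormalDist()
    return [
        (std_normal.inv_cdf((i - 0.5) / n), x)
        for i, x in enumerate(sorted(data), start=1)
    ]

# Hypothetical content uniformity results (% label claim) with one low value.
values = [99.1, 100.4, 98.7, 101.2, 100.0, 99.6, 100.9, 99.8, 100.3, 92.5]
summary = boxplot_summary(values)
print(summary)
for quantile, value in normal_plot_points(values):
    print(round(quantile, 2), value)
```

Plotting the pairs from `normal_plot_points` gives the normal probability plot; points that stray far from a straight line, such as the low value here, are the ones the "fat pencil" would fail to cover.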

Statistical methods are also available to perform a statistical hypothesis test for concluding (at a pre-determined level of statistical confidence) that the data do not follow a theoretical normal distribution. The common tests available in menu-driven statistical software are the Anderson-Darling, Shapiro-Wilk, and Kolmogorov-Smirnov tests. Each of these tests performs best under particular conditions, such as large or small sample sizes. These tests should be used with caution, as several data conditions other than not following a normal distribution can cause them to be statistically significant (this is discussed in the next section). It is also important to remember that these statistical hypothesis tests cannot “prove” that the data are normally distributed; they can only provide evidence that a set of data does not follow a normal distribution (when the p-value associated with the test is statistically significant). If the sample size is small, these tests may not be powerful enough to detect important departures from normality. If the test is statistically significant, the test does not reveal why the data “failed” normality testing. The graphical approaches discussed above provide an understanding of the data distribution that hypothesis testing cannot, because they make it easy to find relevant features in the data (e.g., outliers, multiple peaks).
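
To make the mechanics of such a test concrete, the sketch below computes an Anderson-Darling statistic by hand using only the Python standard library. It is an illustration, not the implementation found in any particular statistical package: the function name is an assumption, the small-sample adjustment and the 5% critical value of about 0.752 are the commonly tabulated ones for the case where the mean and standard deviation are estimated from the data, and the two samples are simulated.

```python
import math
import random
import statistics
from statistics import NormalDist

CRITICAL_5_PERCENT = 0.752  # commonly tabulated 5% point for the adjusted statistic

def adjusted_anderson_darling(data):
    """Anderson-Darling statistic against a normal fitted to the sample mean and SD."""
    xs = sorted(data)
    n = len(xs)
    fitted = NormalDist(statistics.fmean(xs), statistics.stdev(xs))
    # Clamp probabilities away from 0 and 1 so the logarithms stay finite.
    probs = [min(max(fitted.cdf(x), 1e-15), 1 - 1e-15) for x in xs]
    total = sum(
        (2 * i - 1) * (math.log(probs[i - 1]) + math.log(1 - probs[n - i]))
        for i in range(1, n + 1)
    )
    a_squared = -n - total / n
    # Small-sample adjustment used when the mean and SD are estimated from the data.
    return a_squared * (1 + 0.75 / n + 2.25 / n ** 2)

rng = random.Random(37)
samples = {
    "normal sample": [rng.gauss(100.0, 2.0) for _ in range(50)],
    "exponential sample": [rng.expovariate(1.0) for _ in range(50)],
}
for name, sample in samples.items():
    statistic = adjusted_anderson_darling(sample)
    verdict = "reject normality" if statistic > CRITICAL_5_PERCENT else "no evidence against normality"
    print(f"{name}: A*^2 = {statistic:.3f} ({verdict} at the 5% level)")
```

A statistic below the critical value does not show that the data are normal; it only means this sample gave no strong evidence against normality.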

Why might the normal distribution not always hold?

Similar to the process of rolling a die five times, pharmaceutical manufacturing processes and the data that result from them are usually the combination of several sub-processes. In producing a tablet, the sub-processes might occur as: blend, mill, blend, roller compact, mill, and compress. These sub-processes create in-process material distributions, each of which is not necessarily normally distributed. Regardless of the individual distributions of these sub-processes, if there are several, and their variability is of similar magnitude, the final data will be approximately normally distributed. This may seem like magic, but Figure 3 provides an illustration. The example distributions (Weibull, uniform, triangular, beta, and gamma) are not meant to be representative of actual manufacturing distributions; they were selected to illustrate that the mathematical principle works even when using these “non-normal” distributions. To interpret Figure 3, assume that the pre-blend process acts on the material, producing a result that, over time, would follow a Weibull distribution. The material is then passed to the mill, and the mill-blend process acts independently of the pre-blend process and adjusts the material per a uniform distribution. The material is then passed to the roller compactor, which acts independently of the previous processes and adjusts the material in a triangular fashion. The final mill creates an adjustment distributed as a beta and the compression process as a gamma. If several lots were made in this fashion, those independent data distributions would result in an approximately normal distribution of the final product. Several hundred lots are displayed in Figure 3.

A true departure from normality may occur in a number of ways:

Number of sub-processes and/or magnitude of variation. If there are not a sufficient number of sub-processes or the magnitude of variability from one sub-process overwhelms the others, then the final result may not be normal. This might cause a skewed distribution, a distribution with thicker “tails” than a normal distribution, a bi-modal distribution, or any number of distributions. Figure 4 displays a simulated process with a resulting distribution that is non-normal.

A non-normal distribution could occur in tablet manufacturing in several ways. For example, if in a granulated product the particle size of the active granules is greater than the excipient particle size, then there may be segregation at the end of the batch. If the data are collected in a stratified fashion throughout the run, then the potency data will have a right-skewed distribution. Another example is when blend segregation occurs during initial discharge of the bin and/or during compression; in this case, there might be lower or higher potency values at the start and/or end of the run, resulting in a skewed distribution or thick tails.

These types of phenomena are studied and minimized during development, but it may not be possible to eliminate them completely. Here, process knowledge and process control are essential, not the normality of the data distribution. A process with slightly heavier tails but a narrow range of content uniformity results would certainly be more desirable to the patient than a process that follows a normal distribution but has a larger range of results. Failing a test of normality does not automatically imply poor quality product.
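
Both the summing argument and this first kind of departure can be explored by simulation. The sketch below is a toy model under loud assumptions: the five distributions mirror those named for Figure 3, but every parameter, the scaling factor, and the function names are hypothetical choices made here so that the contributions have similar spread. Skewness is used as a rough numerical summary of asymmetry (0 for a symmetric distribution).

```python
import random
import statistics

def skewness(values):
    """Sample skewness: the average cubed z-score (0 for a symmetric distribution)."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean([((v - mean) / sd) ** 3 for v in values])

def lot_total(rng, dominant_scale=1.0):
    """Sum of five independent, non-normal sub-process effects for one lot.

    With dominant_scale=1.0 each effect has a standard deviation of about 0.6.
    A larger value lets the first (Weibull) effect overwhelm the others.
    """
    effects = [
        dominant_scale * rng.weibullvariate(1.0, 1.5),  # pre-blend
        rng.uniform(0.0, 2.0),                          # mill/blend
        rng.triangular(0.0, 3.0, 0.5),                  # roller compaction
        4.0 * rng.betavariate(2.0, 5.0),                # final mill
        rng.gammavariate(4.0, 0.3),                     # compression
    ]
    return sum(effects)

rng = random.Random(2014)
weibull_only = [rng.weibullvariate(1.0, 1.5) for _ in range(5000)]
balanced = [lot_total(rng) for _ in range(5000)]
dominated = [lot_total(rng, dominant_scale=5.0) for _ in range(5000)]

print("skewness, Weibull effect alone: ", round(skewness(weibull_only), 2))
print("skewness, five similar effects: ", round(skewness(balanced), 2))
print("skewness, one dominant effect:  ", round(skewness(dominated), 2))
```

In this toy model the sum of similar-sized effects is typically much closer to symmetric than its most skewed ingredient, while the sum with one dominant effect inherits much of that effect's skewness.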

Truncated normal. In this case, the data may have been truncated due to a natural or “person-made” limit. An example of data truncation can occur as the result of weight control of tablets during compression. If the process is actively adjusting tablet weights during manufacturing, then the resulting weight data (and hence potency and other responses) will become limited and result in a truncated, non-normal, distribution. Figure 5 displays such a result. These data will fail normality, but the result is “better than normal” and better lot quality; lot release should not be affected.
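
A simple way to see the effect of truncation is to simulate it. The sketch below is a simplification: real weight control adjusts the process rather than discarding tablets, and the target, spread, and limits are hypothetical values chosen for illustration.

```python
import random
import statistics

def excess_kurtosis(values):
    """Average fourth-power z-score minus 3 (about 0 for normal data, negative for flat shapes)."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return statistics.fmean([((v - mean) / sd) ** 4 for v in values]) - 3.0

rng = random.Random(5)
target, spread = 100.0, 2.0                       # hypothetical weight target and SD (% of target)
lower, upper = target - spread, target + spread   # hypothetical control limits

uncontrolled = [rng.gauss(target, spread) for _ in range(5000)]
# Keep only the values inside the limits, mimicking a hard truncation.
controlled = [w for w in uncontrolled if lower <= w <= upper]

for label, data in (("uncontrolled", uncontrolled), ("controlled", controlled)):
    print(label, round(statistics.stdev(data), 2), round(excess_kurtosis(data), 2))
```

The controlled data have a smaller standard deviation and a flatter, tail-free shape, so they are less "normal" while being tighter around the target.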

Measurement resolution. It is best to keep the data to as many significant figures as possible for use in data analysis. When the data are subjected to high-level rounding, although the underlying data might be normal, the result may be non-normal data. This degree of rounding not only affects normality, it makes decision-making difficult. The content uniformity data in Figure 6, original and rounded, clearly show that the uniformity results in the “tenths” place pass the Anderson-Darling normality test, but fail the test when the data are rounded to the “ones” place. The normal probability plot shows the evidence of rounding and that the underlying distribution is most likely normal given how the points surround the line evenly.
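
The effect of rounding can be seen by counting how many distinct values remain at each resolution. The data below are simulated from a normal distribution with a hypothetical mean and standard deviation; they are not the values plotted in Figure 6.

```python
import random
from collections import Counter

rng = random.Random(6)
# Hypothetical content uniformity results (% label claim): mean 100, SD 1.5.
raw = [rng.gauss(100.0, 1.5) for _ in range(60)]
tenths = [round(x, 1) for x in raw]  # reported to the "tenths" place
ones = [round(x) for x in raw]       # reported to the "ones" place

print("distinct values at tenths:", len(set(tenths)))
print("distinct values at ones:  ", len(set(ones)))
print("counts at ones:", sorted(Counter(ones).items()))
```

With only a handful of distinct values, the rounded data form stacks of tied points. A normal probability plot shows these as steps around the line, and a normality test can react to the ties even though the underlying values came from a normal distribution.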

Outliers. Outliers in a dataset are another reason that a normality test can be statistically significant (i.e., have a p-value that is small, usually less than 0.05 or 0.01) even if the rest of the data is normal. The normal plot and the boxplot are excellent graphs for revealing outliers. In content uniformity, when outliers are found, it is important to determine the reason for these results or the sources of variability.

The variability in content uniformity data can be attributed to the raw materials, the manufacturing process, and the analytical method. For example, “process-caused” outliers in potency/content uniformity data might be the result of drug substance agglomeration. The ability of a drug product to agglomerate should be studied in development and minimized in manufacturing. However, if minor agglomeration is found in the final product sample, the resulting distribution may fail normality testing and/or may intermittently have outliers that are well within the specifications. Also, “analytical method-caused” outliers could occur when content uniformity data are analyzed by near infrared (NIR). In analyzing content uniformity data by NIR, a mathematical model is developed. If there are changes to a physical property of the material that is not accounted for in the NIR model, the model will not predict well. This poor prediction might result in abnormally large observations, or outliers in the data. Such model prediction errors can typically be detected with the use of spectral matching algorithms prior to prediction of content and inform the analyst about the need for model improvement. Still, the true underlying tablet potency distribution may be unchanged and normal.

Correlation of the data over time. Figure 7 contains developmental content uniformity data from a single compression run plotted in the order it was manufactured. The results show that the data are time-dependent, or autocorrelated. This means that the current content uniformity result is somewhat dependent on what happened during the time period just before it. This time-ordered dependency is found in almost all manufacturing processes and may be induced by factors such as common raw materials, environmental conditions (relative humidity and temperature), and feedback control of the process. The data in Figure 7 do not pass a statistical test of normality, yet they cover a narrow range, 95-105% label claim, and represent excellent control of content uniformity. Failing the normality test does not imply poor quality.
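
Time dependence can be quantified with the lag-1 autocorrelation, the correlation between each result and the one immediately before it. The sketch below compares simulated independent results with a simple first-order autoregressive series; the model, its parameters, and the function names are assumptions made for illustration, not a description of the process behind Figure 7.

```python
import random
import statistics

def lag1_autocorrelation(series):
    """Correlation between consecutive values of a time-ordered series."""
    mean = statistics.fmean(series)
    numerator = sum((a - mean) * (b - mean) for a, b in zip(series, series[1:]))
    denominator = sum((x - mean) ** 2 for x in series)
    return numerator / denominator

def ar1_series(n, phi, sd, rng, mean=100.0):
    """Each deviation from the mean carries over a fraction `phi` of the previous one."""
    values, deviation = [], 0.0
    for _ in range(n):
        deviation = phi * deviation + rng.gauss(0.0, sd)
        values.append(mean + deviation)
    return values

rng = random.Random(7)
independent = [rng.gauss(100.0, 1.0) for _ in range(1000)]
drifting = ar1_series(1000, phi=0.8, sd=0.6, rng=rng)

print("independent:", round(lag1_autocorrelation(independent), 2))
print("drifting:   ", round(lag1_autocorrelation(drifting), 2))
```

Most normality tests assume independent observations, so a series like `drifting` can fail them even when it stays within a narrow range.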

Large sample size. For large samples, it is possible to find very small departures from exact normality using statistical tests. The dice example from Figure 1 clearly shows the appearance of the data as more bell-shaped, unimodal, symmetric, and “normal” as the sample size increased. Yet, one would accept the assumption of normality (p = 0.85 for Shapiro-Wilk and p = 0.85 for Anderson-Darling) for the sample of size 10 and would reject normality (p = 0.013 for Shapiro-Wilk and p < 0.005 for Anderson-Darling) for the sample of size 500. This is a general property of statistical hypothesis tests. All else being equal, as the sample size increases, the statistical test is able to detect increasingly small differences in the data.
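
The sample-size effect can be illustrated with a much simpler statistic than the Shapiro-Wilk or Anderson-Darling tests used above. The sketch below holds a small, hypothetical skewness fixed and divides it by the large-sample approximation to its standard error under normality, sqrt(6/n). This is a rough stand-in chosen for clarity, and the approximation is crude for small n.

```python
import math

def skewness_z_score(sample_skewness, n):
    """Approximate z-score for skewness, using the large-sample standard error sqrt(6/n)."""
    return sample_skewness / math.sqrt(6.0 / n)

fixed_departure = 0.2  # hypothetical, visually minor skewness
for n in (10, 50, 500, 5000):
    z = skewness_z_score(fixed_departure, n)
    verdict = "significant at 5%" if abs(z) > 1.96 else "not significant"
    print(n, round(z, 2), verdict)
```

The departure from normality is identical in every row; only the sample size changes, and with it the verdict.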

Application to content uniformity testing

As noted in the introduction, there has been much commentary both at conferences and in the literature (5-10) on revising content uniformity tests for small sample sizes and developing content uniformity tests for large sample sizes. In these tests, there are assumptions around normality and/or testing for normality.

How should an understanding of data distributions be used in the development of performance qualification and routine lot release criteria? As shown in the examples presented above, failing a statistical test of normality or having a process that produces data that are not normally distributed does not necessarily affect the quality of the product. As a part of continuous process verification, it is important to evaluate production data to ensure that the data are meeting expectations based on development and past production experience. It is, however, not necessary that the data follow an exact normal distribution. The examples presented provide many real world case studies of why this type of requirement should not be applied routinely.

Knowing that production data may not be exactly normally distributed implies that the lot release or validation acceptance criteria should be developed to be robust to departures from normality. If the criteria require the assumption of normality, the test should use statistical functions such as sums and averages to improve robustness to differing data distributions. Alternatively, a nonparametric test (8, 9) (i.e., one that does not require normality) should be developed. Criteria that utilize statistical functions such as minimums/maximums and the “tails” of assumed probability distributions should be avoided (6). One reasonable approach is to provide both a parametric (assumes normal distribution) and nonparametric test such as the European Pharmacopoeia content uniformity procedures for large sample sizes (5, 10).

Conclusion

Although it is reasonable to expect that the underlying content uniformity data are normal, there are several reasons why the data might not be normal or might be normal and still fail a test for normality. These departures from normality do not necessarily affect the quality of the product. As a result, the distribution of the data should be understood as part of process understanding and continuous improvement. The data should not be tested for normality as part of lot release.

It is prudent to develop lot acceptance criteria (e.g., compendial or release tests) that are robust to moderate departures from normality. Or, if testing for normality is a requirement for using the test, then there should be options for non-normal data as well.

Acknowledgement

The authors would like to thank Stan Altan (Janssen), Anthony Carella (Pfizer), Tom Garcia (Pfizer), Jeff Hofer (Eli Lilly), Sonja Sekulic (Pfizer), and Gert Thurau (Roche) for their careful review of this document.

References

1. NIST, “Engineering Statistics Handbook,” accessed Sept. 1, 2014.

2. S. Shapiro, How to Test Normality and Other Distributional Assumptions, Volume 3 (ASQC, 1990).

3. H.C. Thode, Testing for Normality (CRC Press, 2002).

4. J.W. Tukey, Exploratory Data Analysis (Addison-Wesley, 1977).

5. European Pharmacopoeia Supplement 7.7, General Chapter 2.9.47 Uniformity of dosage units using large sample sizes (EDQM, Council of Europe, Strasbourg, France).

6. M. Shen and Y. Tsong, 37 (1) Stimuli to the Revision of the USP: Bias of the USP Harmonized Test for Dose Content Uniformity.

7. W. Hauck et al., 38 (6) Stimuli to the Revision Process: USP Methods for Measuring Uniformity in USP.

8. D. Sandell et al., Drug Info J, 40 (3) 337-344 (2006).

9. J. Bergum and K. Vukovinsky, Pharm Technol, 34 (11) 72-79 (2010).

10. S. Andersen et al., Drug Info J, 43 (11) 287-298 (2009).

About the Authors

Lori B. Pfahler is director, Center for Mathematical Sciences, Merck Manufacturing Division, 770 Sumneytown Pike, PO Box 4, WP97-B208, West Point, PA 19486, tel.: 215.652.2967, email: [email protected].

Kim Erland Vukovinsky is senior director, Pharm Science & Manufacturing Statistics, Pfizer, MS 8220-4439, Eastern Point Rd., Groton, CT 06340, tel.: 860.715.0916, email: [email protected].
